溫馨提示×

您好,登錄后才能下訂單哦!

點擊 登錄注冊 即表示同意《億速云用戶服務條款》

您好,登錄后才能下訂單哦!

這篇文章主要介紹了Python如何實現線性回歸和批量梯度下降法,具有一定借鑒價值,感興趣的朋友可以參考下,希望大家閱讀完這篇文章之后大有收獲,下面讓小編帶著大家一起了解一下。

示例

import numpy as np

import matplotlib.pyplot as plt

import random

class dataMinning:

datasets = []

labelsets = []

addressD = '' #Data folder

addressL = '' #Label folder

npDatasets = np.zeros(1)

npLabelsets = np.zeros(1)

cost = []

numIterations = 0

alpha = 0

theta = np.ones(2)

#pCols = 0

#dRows = 0

def __init__(self,addressD,addressL,theta,numIterations,alpha,datasets=None):

if datasets is None:

self.datasets = []

else:

self.datasets = datasets

self.addressD = addressD

self.addressL = addressL

self.theta = theta

self.numIterations = numIterations

self.alpha = alpha

def readFrom(self):

fd = open(self.addressD,'r')

for line in fd:

tmp = line[:-1].split()

self.datasets.append([int(i) for i in tmp])

fd.close()

self.npDatasets = np.array(self.datasets)

fl = open(self.addressL,'r')

for line in fl:

tmp = line[:-1].split()

self.labelsets.append([int(i) for i in tmp])

fl.close()

tm = []

for item in self.labelsets:

tm = tm + item

self.npLabelsets = np.array(tm)

def genData(self,numPoints,bias,variance):

self.genx = np.zeros(shape = (numPoints,2))

self.geny = np.zeros(shape = numPoints)

for i in range(0,numPoints):

self.genx[i][0] = 1

self.genx[i][1] = i

self.geny[i] = (i + bias) + random.uniform(0,1) * variance

def gradientDescent(self):

xTrans = self.genx.transpose() #

i = 0

while i < self.numIterations:

hypothesis = np.dot(self.genx,self.theta)

loss = hypothesis - self.geny

#record the cost

self.cost.append(np.sum(loss ** 2))

#calculate the gradient

gradient = np.dot(xTrans,loss)

#updata, gradientDescent

self.theta = self.theta - self.alpha * gradient

i = i + 1

def show(self):

print 'yes'

if __name__ == "__main__":

c = dataMinning('c:\\city.txt','c:\\st.txt',np.ones(2),100000,0.000005)

c.genData(100,25,10)

c.gradientDescent()

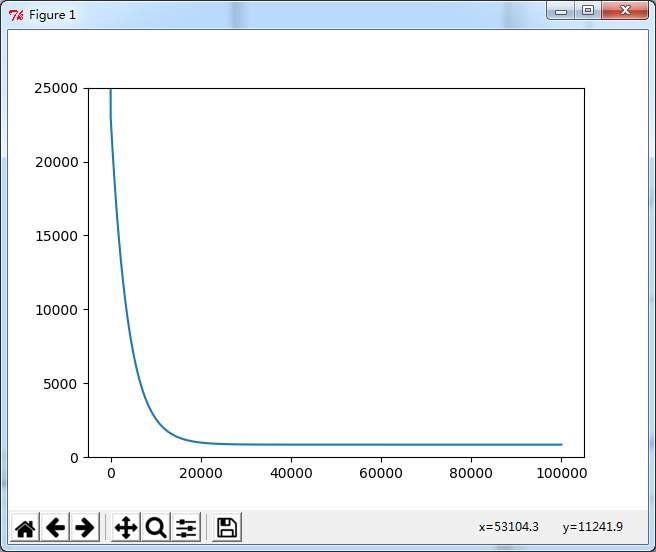

cx = range(len(c.cost))

plt.figure(1)

plt.plot(cx,c.cost)

plt.ylim(0,25000)

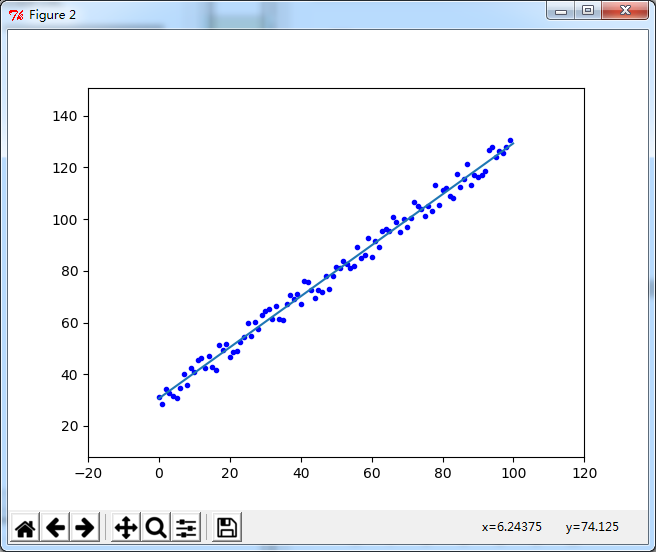

plt.figure(2)

plt.plot(c.genx[:,1],c.geny,'b.')

x = np.arange(0,100,0.1)

y = x * c.theta[1] + c.theta[0]

plt.plot(x,y)

plt.margins(0.2)

plt.show()

圖1. 迭代過程中的誤差cost

感謝你能夠認真閱讀完這篇文章,希望小編分享的“Python如何實現線性回歸和批量梯度下降法”這篇文章對大家有幫助,同時也希望大家多多支持億速云,關注億速云行業資訊頻道,更多相關知識等著你來學習!

免責聲明:本站發布的內容(圖片、視頻和文字)以原創、轉載和分享為主,文章觀點不代表本網站立場,如果涉及侵權請聯系站長郵箱:is@yisu.com進行舉報,并提供相關證據,一經查實,將立刻刪除涉嫌侵權內容。