您好,登錄后才能下訂單哦!

您好,登錄后才能下訂單哦!

小編給大家分享一下Python+OpenCV內置方法如何實現行人檢測,相信大部分人都還不怎么了解,因此分享這篇文章給大家參考一下,希望大家閱讀完這篇文章后大有收獲,下面讓我們一起去了解一下吧!

您是否知道 OpenCV 具有執行行人檢測的內置方法?

OpenCV 附帶一個預訓練的 HOG + 線性 SVM 模型,可用于在圖像和視頻流中執行行人檢測。

今天我們使用Opencv自帶的模型實現對視頻流中的行人檢測,只需打開一個新文件,將其命名為 detect.py ,然后加入代碼:

# import the necessary packages from __future__ import print_function import numpy as np import argparse import cv2 import os

導入需要的包,然后定義項目需要的方法。

def nms(boxes, probs=None, overlapThresh=0.3):

# if there are no boxes, return an empty list

if len(boxes) == 0:

return []

# if the bounding boxes are integers, convert them to floats -- this

# is important since we'll be doing a bunch of divisions

if boxes.dtype.kind == "i":

boxes = boxes.astype("float")

# initialize the list of picked indexes

pick = []

# grab the coordinates of the bounding boxes

x1 = boxes[:, 0]

y1 = boxes[:, 1]

x2 = boxes[:, 2]

y2 = boxes[:, 3]

# compute the area of the bounding boxes and grab the indexes to sort

# (in the case that no probabilities are provided, simply sort on the

# bottom-left y-coordinate)

area = (x2 - x1 + 1) * (y2 - y1 + 1)

idxs = y2

# if probabilities are provided, sort on them instead

if probs is not None:

idxs = probs

# sort the indexes

idxs = np.argsort(idxs)

# keep looping while some indexes still remain in the indexes list

while len(idxs) > 0:

# grab the last index in the indexes list and add the index value

# to the list of picked indexes

last = len(idxs) - 1

i = idxs[last]

pick.append(i)

# find the largest (x, y) coordinates for the start of the bounding

# box and the smallest (x, y) coordinates for the end of the bounding

# box

xx1 = np.maximum(x1[i], x1[idxs[:last]])

yy1 = np.maximum(y1[i], y1[idxs[:last]])

xx2 = np.minimum(x2[i], x2[idxs[:last]])

yy2 = np.minimum(y2[i], y2[idxs[:last]])

# compute the width and height of the bounding box

w = np.maximum(0, xx2 - xx1 + 1)

h = np.maximum(0, yy2 - yy1 + 1)

# compute the ratio of overlap

overlap = (w * h) / area[idxs[:last]]

# delete all indexes from the index list that have overlap greater

# than the provided overlap threshold

idxs = np.delete(idxs, np.concatenate(([last],

np.where(overlap > overlapThresh)[0])))

# return only the bounding boxes that were picked

return boxes[pick].astype("int")

image_types = (".jpg", ".jpeg", ".png", ".bmp", ".tif", ".tiff")

def list_images(basePath, contains=None):

# return the set of files that are valid

return list_files(basePath, validExts=image_types, contains=contains)

def list_files(basePath, validExts=None, contains=None):

# loop over the directory structure

for (rootDir, dirNames, filenames) in os.walk(basePath):

# loop over the filenames in the current directory

for filename in filenames:

# if the contains string is not none and the filename does not contain

# the supplied string, then ignore the file

if contains is not None and filename.find(contains) == -1:

continue

# determine the file extension of the current file

ext = filename[filename.rfind("."):].lower()

# check to see if the file is an image and should be processed

if validExts is None or ext.endswith(validExts):

# construct the path to the image and yield it

imagePath = os.path.join(rootDir, filename)

yield imagePath

def resize(image, width=None, height=None, inter=cv2.INTER_AREA):

dim = None

(h, w) = image.shape[:2]

# 如果高和寬為None則直接返回

if width is None and height is None:

return image

# 檢查寬是否是None

if width is None:

# 計算高度的比例并并按照比例計算寬度

r = height / float(h)

dim = (int(w * r), height)

# 高為None

else:

# 計算寬度比例,并計算高度

r = width / float(w)

dim = (width, int(h * r))

resized = cv2.resize(image, dim, interpolation=inter)

# return the resized image

return resizednms函數:非極大值抑制。

list_images:讀取圖片。

resize:等比例改變大小。

# construct the argument parse and parse the arguments

ap = argparse.ArgumentParser()

ap.add_argument("-i", "--images", default='test1', help="path to images directory")

args = vars(ap.parse_args())

# 初始化 HOG 描述符/人物檢測器

hog = cv2.HOGDescriptor()

hog.setSVMDetector(cv2.HOGDescriptor_getDefaultPeopleDetector())定義輸入圖片的文件夾路徑。

初始化HOG檢測器。

# loop over the image paths

for imagePath in list_images(args["images"]):

# 加載圖像并調整其大小以

# (1)減少檢測時間

# (2)提高檢測精度

image = cv2.imread(imagePath)

image = resize(image, width=min(400, image.shape[1]))

orig = image.copy()

print(image)

# detect people in the image

(rects, weights) = hog.detectMultiScale(image, winStride=(4, 4),

padding=(8, 8), scale=1.05)

# draw the original bounding boxes

print(rects)

for (x, y, w, h) in rects:

cv2.rectangle(orig, (x, y), (x + w, y + h), (0, 0, 255), 2)

# 使用相當大的重疊閾值對邊界框應用非極大值抑制,以嘗試保持仍然是人的重疊框

rects = np.array([[x, y, x + w, y + h] for (x, y, w, h) in rects])

pick = nms(rects, probs=None, overlapThresh=0.65)

# draw the final bounding boxes

for (xA, yA, xB, yB) in pick:

cv2.rectangle(image, (xA, yA), (xB, yB), (0, 255, 0), 2)

# show some information on the number of bounding boxes

filename = imagePath[imagePath.rfind("/") + 1:]

print("[INFO] {}: {} original boxes, {} after suppression".format(

filename, len(rects), len(pick)))

# show the output images

cv2.imshow("Before NMS", orig)

cv2.imshow("After NMS", image)

cv2.waitKey(0)遍歷 --images 目錄中的圖像。

然后,將圖像調整為最大寬度為 400 像素。嘗試減少圖像尺寸的原因有兩個:

減小圖像大小可確保需要評估圖像金字塔中的滑動窗口更少(即從線性 SVM 中提取 HOG 特征,然后將其傳遞給線性 SVM),從而減少檢測時間(并提高整體檢測吞吐量)。

調整我們的圖像大小也提高了我們行人檢測的整體準確性(即更少的誤報)。

通過調用 hog 描述符的 detectMultiScale 方法,檢測圖像中的行人。 detectMultiScale 方法構造了一個比例為1.05 的圖像金字塔,滑動窗口步長分別為x 和y 方向的(4, 4) 個像素。

滑動窗口的大小固定為 64 x 128 像素,正如開創性的 Dalal 和 Triggs 論文《用于人體檢測的定向梯度直方圖》所建議的那樣。 detectMultiScale 函數返回 rects 的 2 元組,或圖像中每個人的邊界框 (x, y) 坐標和 weights ,SVM 為每次檢測返回的置信度值。

較大的尺度大小將評估圖像金字塔中的較少層,這可以使算法運行得更快。然而,規模太大(即圖像金字塔中的層數較少)會導致行人無法被檢測到。同樣,過小的比例尺會顯著增加需要評估的圖像金字塔層的數量。這不僅會造成計算上的浪費,還會顯著增加行人檢測器檢測到的誤報數量。也就是說,在執行行人檢測時,比例是要調整的最重要的參數之一。我將在以后的博客文章中對每個參數進行更徹底的審查以檢測到多尺度。

獲取初始邊界框并將它們繪制在圖像上。

但是,對于某些圖像,您會注意到每個人檢測到多個重疊的邊界框。

在這種情況下,我們有兩個選擇。我們可以檢測一個邊界框是否完全包含在另一個邊界框內。或者我們可以應用非最大值抑制并抑制與重要閾值重疊的邊界框。

應用非極大值抑制后,得到最終的邊界框,然后輸出圖像。

運行結果:

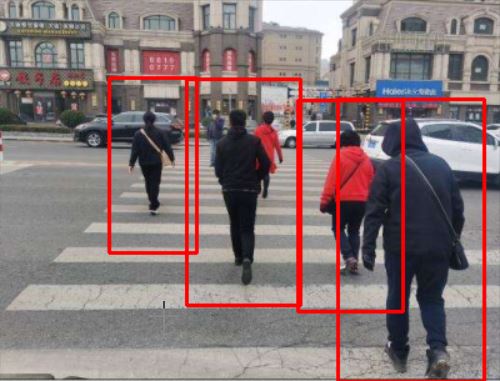

nms前:

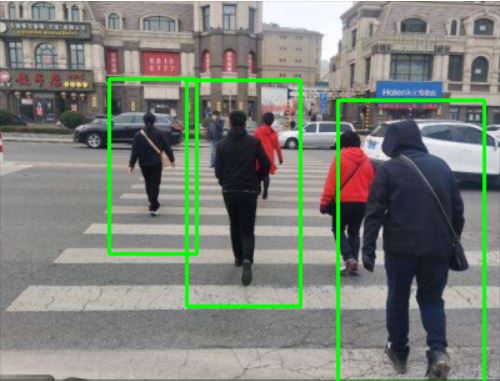

nms后:

以上是“Python+OpenCV內置方法如何實現行人檢測”這篇文章的所有內容,感謝各位的閱讀!相信大家都有了一定的了解,希望分享的內容對大家有所幫助,如果還想學習更多知識,歡迎關注億速云行業資訊頻道!

免責聲明:本站發布的內容(圖片、視頻和文字)以原創、轉載和分享為主,文章觀點不代表本網站立場,如果涉及侵權請聯系站長郵箱:is@yisu.com進行舉報,并提供相關證據,一經查實,將立刻刪除涉嫌侵權內容。