This article explains how Keras reads h5 (HDF5) files through load_weights and load_model, by walking through the relevant source code.

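There are plenty of simple examples online for saving and loading h5 models and weights. Loading comes down to two functions:

1. keras.models.load_model(): reads the network structure and the weights

2. model.load_weights(): reads the weights only (it is a method of the model instance, not a function in keras.models)

The code of load_model contains the code of load_weights. The difference is that load_weights needs an existing network, and it has to write the weight data into the tensors of the corresponding layers.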
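The relationship between the two functions can be sketched in plain Python. This is only a toy model and not Keras code: build_model, load_weights and load_model below are hypothetical stand-ins that operate on dicts, with a network represented as a mapping from layer name to weights.

```python
# Toy stand-ins (not Keras APIs): a "network" is a dict of layer name -> weights.

def build_model(config):
    """Create the network structure with uninitialised weights."""
    return {name: None for name in config["layers"]}


def load_weights(model, saved_weights):
    """Fill an existing network; the network must already exist."""
    for name in model:
        model[name] = saved_weights[name]


def load_model(saved_file):
    """Rebuild the network from its config, then reuse load_weights."""
    model = build_model(saved_file["model_config"])
    load_weights(model, saved_file["model_weights"])
    return model


# Made-up file contents for illustration only.
saved_file = {
    "model_config": {"layers": ["conv1", "fc1000"]},
    "model_weights": {"conv1": [0.1, 0.2], "fc1000": [0.3]},
}

# Weights-only loading: the caller builds the network first.
net = build_model(saved_file["model_config"])
load_weights(net, saved_file["model_weights"])

# Full-model loading: structure and weights come from the same file.
restored = load_model(saved_file)
```

Both paths end with the same network contents; the only difference is who is responsible for building the structure.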

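The following example loads h5 weights into ResNet50:

    import keras
    from keras.preprocessing import image
    import numpy as np
    from network.resnet50 import ResNet50  # modified so that it does not load weights (the official version also works)

    model = ResNet50()

    # by_name defaults to False; when True, only weights of layers with matching names are loaded
    # Here the layer names in the model and in the weight file correspond (except for the input layer)
    model.load_weights(r'\models\resnet50_weights_tf_dim_ordering_tf_kernels_v2.h5')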

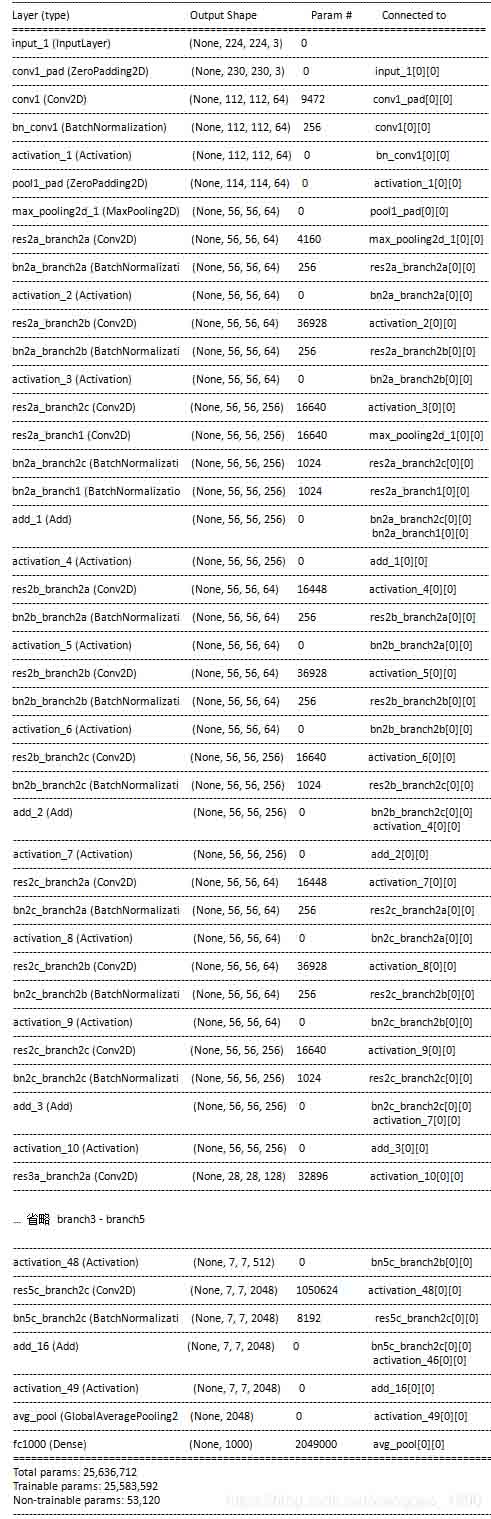

The model structure, as printed by model.summary()

I. Loading weights into a model: load_weights()

    def load_weights(self, filepath, by_name=False, skip_mismatch=False, reshape=False):
        if h5py is None:
            raise ImportError('`load_weights` requires h5py.')
        with h5py.File(filepath, mode='r') as f:
            # A full-model file keeps its weights under the 'model_weights' group
            if 'layer_names' not in f.attrs and 'model_weights' in f:
                f = f['model_weights']
            if by_name:
                saving.load_weights_from_hdf5_group_by_name(
                    f, self.layers, skip_mismatch=skip_mismatch, reshape=reshape)
            else:
                saving.load_weights_from_hdf5_group(f, self.layers, reshape=reshape)

Only saving.load_weights_from_hdf5_group(f, self.layers, reshape=reshape) matters here. Its parameter f is an h5py file object.
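As a rough illustration of what the by_name flag changes, the sketch below contrasts the two matching strategies on plain lists of layer names. It is not the Keras implementation; match_by_order, match_by_name and the layer name my_new_head are hypothetical and exist only for this example.

```python
# Toy illustration (plain Python, not Keras code) of the two matching strategies.

def match_by_order(model_layer_names, file_layer_names):
    """Pair the i-th model layer with the i-th saved layer; counts must agree."""
    if len(model_layer_names) != len(file_layer_names):
        raise ValueError("layer counts differ")
    return list(zip(model_layer_names, file_layer_names))


def match_by_name(model_layer_names, file_layer_names):
    """Pair only layers whose names appear in both; others are left untouched."""
    saved = set(file_layer_names)
    return [(name, name) for name in model_layer_names if name in saved]


model_layers = ["conv1", "bn_conv1", "my_new_head"]  # last name is made up
file_layers = ["conv1", "bn_conv1", "fc1000"]

ordered = match_by_order(model_layers, file_layers)
named = match_by_name(model_layers, file_layers)
```

Positional matching pairs my_new_head with fc1000 regardless of the names, while name matching simply skips the layer that has no counterpart in the file. This is why by_name=True is the usual choice when only part of a network is reused.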

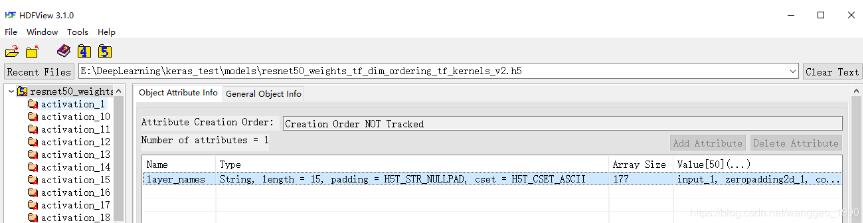

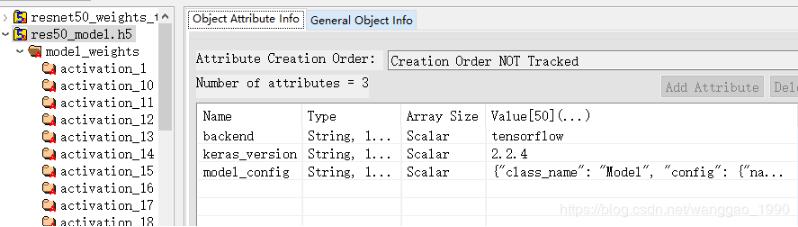

讀取h6文件使用 h6py 包,簡單使用HDFView看一下resnet50的權重文件。

resnet50_v2 這個權重文件,僅一個attr “layer_names”, 該attr包含177個string的Array,Array中每個元素就是層的名字(這里是嚴格對應在keras進行保存權重時網絡中每一層的name值,且層的順序也嚴格對應)。

對于每一個key(層名),都有一個屬性"weights_names",(value值可能為空)。

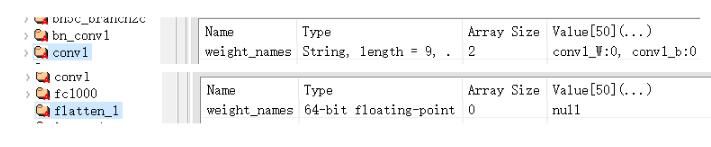

例如:

conv1的"weights_names"有"conv1_W:0"和"conv1_b:0",

flatten_1的"weights_names"為null。

這里就簡單介紹,后面在代碼中說明h6py如何讀取權重數據。

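II. Loading weights from an HDF5 file: load_weights_from_hdf5_group()

1. Finding the layers of the Keras model that have weight tensors (tf.Variable)

    def load_weights_from_hdf5_group(f, layers, reshape=False):
        # Filter model.layers of the Keras ResNet50 model:
        # keep only layers whose layer.weights is non-empty,
        # dropping layers that have no learnable parameters
        filtered_layers = []
        for layer in layers:
            weights = layer.weights
            if weights:
                filtered_layers.append(layer)

filtered_layers is the list of the 107 layers that remain after the layers without weights (input, padding, activation, merge/add, flatten and so on) are filtered out of the current ResNet50 model.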
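The filter can be tried without Keras installed. In the sketch below FakeLayer is a hypothetical stand-in that only carries the two attributes the filter looks at, and the weight names are illustrative strings rather than real tf.Variable objects.

```python
# Hypothetical stand-in for a Keras layer: only `name` and `weights` matter here.
class FakeLayer:
    def __init__(self, name, weights=()):
        self.name = name
        self.weights = list(weights)


layers = [
    FakeLayer("input_1"),
    FakeLayer("conv1", ["conv1/kernel:0", "conv1/bias:0"]),
    FakeLayer("activation_1"),
    FakeLayer("fc1000", ["fc1000/kernel:0", "fc1000/bias:0"]),
]

# Same test as in the code above: keep layers with a non-empty weights list.
filtered_layers = [layer for layer in layers if layer.weights]
filtered_names = [layer.name for layer in filtered_layers]
```

An empty list is falsy in Python, so layers such as input_1 and activation_1 drop out without any explicit length check.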

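2. Getting the names of the layers that contain weight data from the HDF5 file

As seen earlier in HDFView, every layer has a "weight_names" attribute. If it is not empty, the layer has weight data.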

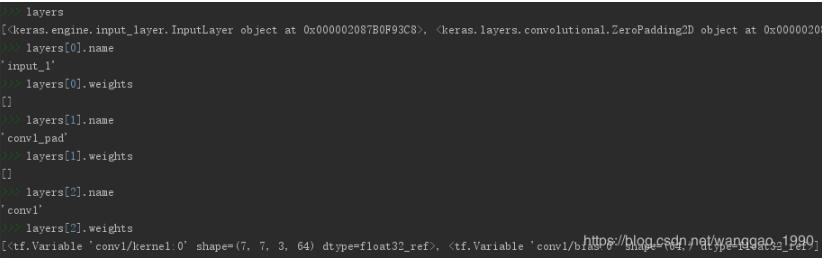

First, some basic console operations on the h5py object f (look up the definitions of the related data structures if needed):

    >>> f
    <HDF5 file "resnet50_weights_tf_dim_ordering_tf_kernels_v2.h5" (mode r)>
    >>> f.filename
    'E:\\DeepLearning\\keras_test\\models\\resnet50_weights_tf_dim_ordering_tf_kernels_v2.h5'
    >>> f.name
    '/'
    >>> f.attrs.keys()  # list of attributes of f
    <KeysViewHDF5 ['layer_names']>
    >>> f.keys()  # not in model order
    <KeysViewHDF5 ['activation_1', 'activation_10', 'activation_11', 'activation_12', ..., 'activation_8', 'activation_9', 'avg_pool', 'bn2a_branch1', 'bn2a_branch2a', ..., 'res5c_branch2a', 'res5c_branch2b', 'res5c_branch2c', 'zeropadding2d_1']>
    >>> f.attrs['layer_names']  # *** ordered, matches summary() ***
    array([b'input_1', b'zeropadding2d_1', b'conv1', b'bn_conv1',
           b'activation_1', b'maxpooling2d_1', b'res2a_branch2a', ...,
           b'res2a_branch1', b'bn2a_branch2c', b'bn2a_branch1', b'merge_1',
           b'activation_47', b'res5c_branch2b', b'bn5c_branch2b', ...,
           b'activation_48', b'res5c_branch2c', b'bn5c_branch2c', b'merge_16',
           b'activation_49', b'avg_pool', b'flatten_1', b'fc1000'], dtype='|S15')
    >>> f['input_1']
    <HDF5 group "/input_1" (0 members)>
    >>> f['input_1'].attrs.keys()  # in Keras files every layer group has a 'weight_names' attribute
    <KeysViewHDF5 ['weight_names']>
    >>> f['input_1'].attrs['weight_names']  # the input layer has no weights
    array([], dtype=float64)
    >>> f['conv1']
    <HDF5 group "/conv1" (2 members)>
    >>> f['conv1'].attrs.keys()
    <KeysViewHDF5 ['weight_names']>
    >>> f['conv1'].attrs['weight_names']  # the conv layer has weights W and b
    array([b'conv1_W:0', b'conv1_b:0'], dtype='|S9')
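The attribute values in the session above are byte strings, so they have to be decoded before they can be compared with layer names in the model. A minimal sketch of that step follows, using a plain dict in place of the h5py file. The dict layout, the helper decode_attr and the fc1000 weight names are assumptions made for this example; only the conv1 names come from the session.

```python
# A plain-dict stand-in for the HDF5 file: attribute values are byte strings,
# as in the console session. This is not a real h5py object.
fake_file = {
    "attrs": {"layer_names": [b"input_1", b"conv1", b"flatten_1", b"fc1000"]},
    "input_1": {"attrs": {"weight_names": []}},
    "conv1": {"attrs": {"weight_names": [b"conv1_W:0", b"conv1_b:0"]}},
    "flatten_1": {"attrs": {"weight_names": []}},
    "fc1000": {"attrs": {"weight_names": [b"fc1000_W:0", b"fc1000_b:0"]}},
}


def decode_attr(group, name):
    """Decode a list of byte strings stored under an attribute."""
    return [item.decode("utf8") for item in group["attrs"][name]]


# The order of layer_names is preserved, unlike the order of the group keys.
layer_names = decode_attr(fake_file, "layer_names")

# Keep only the layers whose weight_names attribute is non-empty.
layers_with_weights = [
    name for name in layer_names if decode_attr(fake_file[name], "weight_names")
]
```

Iterating over the decoded layer_names attribute, rather than over the group keys, is what keeps the result in model order.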

Read the names of the layers that have weight data from the file:

    # Get the layer names stored in the HDF5 file, in order
    layer_names = load_attributes_from_hdf5_group(f, 'layer_names')
    # roughly equivalent to:
    # layer_names = [n.decode('utf8') for n in f.attrs['layer_names']]

    filtered_layer_names = []
    for name in layer_names:
        g = f[name]
        weight_names = load_attributes_from_hdf5_group(g, 'weight_names')
        # roughly equivalent to:
        # weight_names = [n.decode('utf8') for n in f[name].attrs['weight_names']]

        # keep the names of the layers that have weights
        if weight_names:
            filtered_layer_names.append(name)
    layer_names = filtered_layer_names

    # Check that the number of model layers with weight tensors equals
    # the number of layers with weights read from the h5 file
    if len(layer_names) != len(filtered_layers):
        raise ValueError('You are trying to load a weight file '
                         'containing ' + str(len(layer_names)) +
                         ' layers into a model with ' +
                         str(len(filtered_layers)) + ' layers.')

3. Pairing the weight data read from the HDF5 file with the tf.Variable objects of the Keras model layers

First, a look at the weight data and at a layer's weight variables (tf.Variable tensors), using conv1 as the example:

    >>> f['conv1']['conv1_W:0']  # the conv1_W:0 weight dataset
    <HDF5 dataset "conv1_W:0": shape (7, 7, 3, 64), type "<f4">
    >>> f['conv1']['conv1_W:0'].value  # its value, a standard 4-D array (.value was removed in h5py 3.0; use [()] there)
    array([[[[ 2.82526277e-02, -1.18737184e-02,  1.51488732e-03, ...,
              -1.07003953e-02, -5.27982824e-02, -1.36667420e-03],
             [ 5.86827798e-03,  5.04415408e-02,  3.46324709e-03, ...,
               1.01423981e-02,  1.39493728e-02,  1.67549420e-02],
             [-2.44090753e-03, -4.86173332e-02,  2.69966386e-03, ...,
              -3.44439060e-04,  3.48098315e-02,  6.28910400e-03]],
            [[ 1.81872323e-02, -7.20698107e-03,  4.80302610e-03, ...,
              ... ]]]])
    >>> conv1_w = np.asarray(f['conv1']['conv1_W:0'])  # convert directly to a NumPy array
    >>> conv1_w.shape
    (7, 7, 3, 64)
    >>> filtered_layers[0]  # the convolution layer
    <keras.layers.convolutional.Conv2D object at 0x000001F7487C0E10>
    >>> filtered_layers[0].name
    'conv1'
    >>> filtered_layers[0].input
    <tf.Tensor 'conv1_pad/Pad:0' shape=(?, 230, 230, 3) dtype=float32>
    >>> filtered_layers[0].weights  # weight variables of the convolution layer
    [<tf.Variable 'conv1/kernel:0' shape=(7, 7, 3, 64) dtype=float32_ref>, <tf.Variable 'conv1/bias:0' shape=(64,) dtype=float32_ref>]

The model's weight variables (tf.Variable tensors) are then paired with the weight data that was read, so that the data can later be written into the variables.
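The pairing step can be sketched as follows. pair_weights is a hypothetical helper, and the variables and values are plain strings here instead of tf.Variable objects and NumPy arrays; the point is only the count check followed by zip.

```python
def pair_weights(layer_name, variables, values):
    """Pair each model variable with its saved array, refusing count mismatches."""
    if len(variables) != len(values):
        raise ValueError(
            "Layer %r expects %d weights, but the saved weights have %d elements."
            % (layer_name, len(variables), len(values))
        )
    return list(zip(variables, values))


# Placeholders only: strings stand in for tf.Variable objects and arrays.
weight_value_tuples = []
weight_value_tuples += pair_weights(
    "conv1", ["conv1/kernel:0", "conv1/bias:0"], ["saved_W", "saved_b"]
)

# A layer whose saved data has too few entries is rejected before any write.
try:
    pair_weights("fc1000", ["fc1000/kernel:0", "fc1000/bias:0"], ["saved_W"])
    mismatch_detected = False
except ValueError:
    mismatch_detected = True
```

Because zip pairs by position, the scheme relies on the file and the model listing a layer's weights in the same order (kernel first, then bias, in this example).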

    weight_value_tuples = []
    # Enumerate the filtered layers
    for k, name in enumerate(layer_names):
        g = f[name]
        weight_names = load_attributes_from_hdf5_group(g, 'weight_names')
        # Read this layer's weight data from the file as a list of NumPy arrays
        weight_values = [np.asarray(g[weight_name]) for weight_name in weight_names]
        # Get the weight variables (tf.Variable) of the matching Keras layer
        layer = filtered_layers[k]
        symbolic_weights = layer.weights
        # Preprocess the weight data (original_keras_version and original_backend
        # are read from f.attrs earlier in the function, omitted here)
        weight_values = preprocess_weights_for_loading(layer, weight_values,
                                                       original_keras_version,
                                                       original_backend,
                                                       reshape=reshape)
        # Check that the number of arrays equals the number of variables
        if len(weight_values) != len(symbolic_weights):
            raise ValueError('Layer #' + str(k) + ' (named "' + layer.name +
                             '" in the current model) was found to correspond to layer ' + name +
                             ' in the save file. However the new layer ' + layer.name + ' expects ' +
                             str(len(symbolic_weights)) + ' weights, but the saved weights have ' +
                             str(len(weight_values)) + ' elements.')
        # Pair each tf.Variable with its weight data
        weight_value_tuples += zip(symbolic_weights, weight_values)

4. Writing the weight data into the layers' weight variables

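Step 3 paired every layer's weight variable with its weight data. What remains is to write the values with TensorFlow's sess.run, which takes a single line of code:

    K.batch_set_value(weight_value_tuples)

The actual implementation: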

    def batch_set_value(tuples):
        if tuples:
            assign_ops = []
            feed_dict = {}
            for x, value in tuples:
                # Cast the weight data to the dtype of the variable
                value = np.asarray(value, dtype=dtype(x))
                tf_dtype = tf.as_dtype(x.dtype.name.split('_')[0])
                if hasattr(x, '_assign_placeholder'):
                    assign_placeholder = x._assign_placeholder
                    assign_op = x._assign_op
                else:
                    # tf.placeholder that will carry the weight value
                    assign_placeholder = tf.placeholder(tf_dtype, shape=value.shape)
                    # assign operation that writes the placeholder into the variable
                    assign_op = x.assign(assign_placeholder)
                    # cached on the variable so that later calls reuse them
                    x._assign_placeholder = assign_placeholder
                    x._assign_op = assign_op
                assign_ops.append(assign_op)
                feed_dict[assign_placeholder] = value
            # Run all assign operations in one tf.Session().run() call
            get_session().run(assign_ops, feed_dict=feed_dict)

This completes the case where the network exists first and the weights are then loaded from an h5 file. The model can now be used directly for predict.
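One detail of batch_set_value is easy to overlook: the placeholder and the assign operation are stored on the variable as _assign_placeholder and _assign_op. A plausible reading is that this avoids adding new operations to the TensorFlow graph every time weights are set. The toy below models only that caching idea; FakeVariable, get_assign_op and the created_ops list are hypothetical and involve no TensorFlow at all.

```python
# Toy model of the caching in batch_set_value; nothing here is a TensorFlow object.
created_ops = []  # records every assign op that gets built


class FakeVariable:
    def __init__(self, name):
        self.name = name
        self.value = None


def get_assign_op(var):
    """Build the assign op once per variable and cache it on the variable."""
    if not hasattr(var, "_assign_op"):
        def assign(value, target=var):
            target.value = value

        var._assign_op = assign
        created_ops.append(var.name)
    return var._assign_op


def batch_set_value(tuples):
    """Collect all ops first, then apply them together (one 'session run')."""
    pending = [(get_assign_op(var), value) for var, value in tuples]
    for op, value in pending:
        op(value)


kernel, bias = FakeVariable("conv1/kernel:0"), FakeVariable("conv1/bias:0")
batch_set_value([(kernel, [1.0, 2.0]), (bias, [0.5])])
batch_set_value([(kernel, [3.0, 4.0]), (bias, [0.0])])  # reuses the cached ops
```

After two rounds of assignment only two ops have been built, one per variable, while the variables hold the values from the second call.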

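III. Loading a model: load_model()

This is largely the same as before. The only addition is loading the network; the weights are then loaded in the same way.

First, save the model that received its weights above with model.save() as res50_model.h5, and open it in HDFView.

The file now has three attributes: backend, keras_version and model_config. They record which backend produced the model file, the Keras version that wrote it, and the network structure in JSON format.

There is one key, "model_weights". Compared with the earlier weights-only h5 file, its attributes gain two entries and become ['backend', 'keras_version', 'layer_names']. The entries under this key are exactly the same as the weight data of the earlier h5 file.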
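Because the content under model_weights has the same layout as a weights-only file, a single check is enough to read weights from either kind of file, which is what the first lines of load_weights do. A minimal sketch with dicts standing in for h5py groups; the dict layout, weights_root and the attribute values are assumptions made for this example.

```python
def weights_root(f):
    """Return the group that holds per-layer weights for either kind of file."""
    if "layer_names" not in f["attrs"] and "model_weights" in f["groups"]:
        return f["groups"]["model_weights"]
    return f


# Dict stand-ins for the two file layouts (not real h5py objects).
weights_only_file = {
    "attrs": {"layer_names": [b"conv1"]},
    "groups": {"conv1": {}},
}
full_model_file = {
    # placeholder attribute values, for illustration only
    "attrs": {"backend": b"tensorflow", "keras_version": b"2.x", "model_config": b"{}"},
    "groups": {
        "model_weights": {
            "attrs": {"layer_names": [b"conv1"]},
            "groups": {"conv1": {}},
        }
    },
}

root_a = weights_only_file and weights_root(weights_only_file)
root_b = weights_root(full_model_file)
```

In both cases the returned group carries a layer_names attribute, so the rest of the loading code does not need to know which kind of file it was given.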

As before, first inspect the file structure from Python:

    >>> ff
    <HDF5 file "res50_model.h5" (mode r)>
    >>> ff.attrs.keys()
    <KeysViewHDF5 ['backend', 'keras_version', 'model_config']>
    >>> ff.keys()
    <KeysViewHDF5 ['model_weights']>
    >>> ff['model_weights'].attrs.keys()  # ff['model_weights'] has three attributes
    <KeysViewHDF5 ['backend', 'keras_version', 'layer_names']>
    >>> ff['model_weights'].keys()  # not in model order
    <KeysViewHDF5 ['activation_1', 'activation_10', 'activation_11', 'activation_12', …, 'avg_pool', 'bn2a_branch1', 'bn2a_branch2a', 'bn2a_branch2b', …, 'bn5c_branch2c', 'bn_conv1', 'conv1', 'conv1_pad', 'fc1000', 'input_1', …, 'c_branch2a', 'res5c_branch2b', 'res5c_branch2c']>
    >>> ff['model_weights'].attrs['layer_names']  # ordered
    array([b'input_1', b'conv1_pad', b'conv1', b'bn_conv1', b'activation_1',
           b'pool1_pad', b'max_pooling2d_1', b'res2a_branch2a', b'bn2a_branch2a',
           b'activation_2', b'res2a_branch2b',
           ... (omitted)
           b'activation_48', b'res5c_branch2c', b'bn5c_branch2c', b'add_16',
           b'activation_49', b'avg_pool', b'fc1000'], dtype='|S15')
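The model_config attribute holds the network structure as a JSON string stored as bytes, so it has to be decoded before parsing. The sketch below uses a hand-written and heavily shortened config; a real model_config carries far more fields per layer, and the exact shape shown here is an assumption for illustration.

```python
import json

# Hand-written, heavily shortened config; a real model_config is much larger.
raw_model_config = json.dumps({
    "class_name": "Model",
    "config": {
        "name": "resnet50",
        "layers": [
            {"class_name": "InputLayer", "name": "input_1"},
            {"class_name": "Conv2D", "name": "conv1"},
        ],
    },
}).encode("utf-8")

# The attribute comes back as bytes, so decode before parsing the JSON.
model_config = json.loads(raw_model_config.decode("utf-8"))

# List (layer class, layer name) pairs in the order the config declares them.
layer_summary = [
    (layer["class_name"], layer["name"])
    for layer in model_config["config"]["layers"]
]
```

A parsed config of this kind is what the model-building step receives when the network is reconstructed from the file.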

1. The main model-loading function: load_model

    def load_model(filepath, custom_objects=None, compile=True):
        if h5py is None:
            raise ImportError('`load_model` requires h5py.')
        model = None
        opened_new_file = not isinstance(filepath, h5py.Group)
        # Wrap the h5 file in an h5dict so that values can be read by key
        f = h5dict(filepath, 'r')
        try:
            # Deserialize and compile
            model = _deserialize_model(f, custom_objects, compile)
        finally:
            if opened_new_file:
                f.close()
        return model

2. Deserializing and compiling: _deserialize_model

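The main parts of the function def _deserialize_model(f, custom_objects=None, compile=True) are shown below.

Step 1: load the network structure. The implementation is the same as keras.models.model_from_json().

    # Read the JSON description of the network structure from the h5 file
    model_config = f['model_config']
    model_config = json.loads(model_config.decode('utf-8'))
    # Build the network structure from the JSON
    model = model_from_config(model_config, custom_objects=custom_objects)

Step 2: load the network weights. This is the same as model.load_weights().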

    # Get the ordered layer names and the model layers
    model_weights_group = f['model_weights']
    layer_names = model_weights_group['layer_names']
    layers = model.layers

    # Keep the layers that have weight tensors
    filtered_layers = []
    for layer in layers:
        weights = layer.weights
        if weights:
            filtered_layers.append(layer)

    # Keep the names of the layers that have weight data in the file
    filtered_layer_names = []
    for name in layer_names:
        layer_weights = model_weights_group[name]
        weight_names = layer_weights['weight_names']
        if weight_names:
            filtered_layer_names.append(name)
    layer_names = filtered_layer_names

    # Pair the variables with the data in weight_value_tuples
    weight_value_tuples = []
    for k, name in enumerate(layer_names):
        layer_weights = model_weights_group[name]
        weight_names = layer_weights['weight_names']
        weight_values = [layer_weights[weight_name] for weight_name in weight_names]
        layer = filtered_layers[k]
        symbolic_weights = layer.weights
        weight_values = preprocess_weights_for_loading(...)
        weight_value_tuples += zip(symbolic_weights, weight_values)

    # Write everything in one batch
    K.batch_set_value(weight_value_tuples)

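Step 3: compile and return the model

Normally the model is complete once the network is built, the weights are loaded and compile has run. If the file contains further settings, they are handled in an extra step (a model saved after training carries additional parameters).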

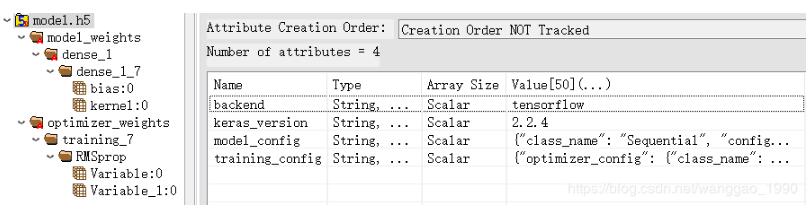

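For example, when a network that has only dense layers is trained and then saved, the file gains a "training_config" attribute and an "optimizer_weights" key, as the figure shows.

The current res50_model.h5 has no such extra settings.

The handling code is as follows: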

    if compile:
        training_config = f.get('training_config')
        if training_config is None:
            warnings.warn('No training configuration found in save file: '
                          'the model was *not* compiled. Compile it manually.')
            return model
        training_config = json.loads(training_config.decode('utf-8'))
        optimizer_config = training_config['optimizer_config']
        optimizer = optimizers.deserialize(optimizer_config, custom_objects=custom_objects)

        # Recover loss functions and metrics.
        loss = convert_custom_objects(training_config['loss'])
        metrics = convert_custom_objects(training_config['metrics'])
        sample_weight_mode = training_config['sample_weight_mode']
        loss_weights = training_config['loss_weights']

        # Compile model.
        model.compile(optimizer=optimizer, loss=loss, metrics=metrics,
                      loss_weights=loss_weights, sample_weight_mode=sample_weight_mode)

        # Set optimizer weights.
        if 'optimizer_weights' in f:
            # Build train function (to get weight updates).
            model._make_train_function()
            optimizer_weights_group = f['optimizer_weights']
            optimizer_weight_names = [
                n.decode('utf8') for n in optimizer_weights_group['weight_names']]
            optimizer_weight_values = [
                optimizer_weights_group[n] for n in optimizer_weight_names]
            try:
                model.optimizer.set_weights(optimizer_weight_values)
            except ValueError:
                warnings.warn('Error in loading the saved optimizer state. As a result, '
                              'your model is starting with a freshly initialized optimizer.')
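The first branch of this code, returning an uncompiled model with a warning when no training configuration was saved, can be exercised on its own. In the sketch below read_training_config is a hypothetical helper, the attrs dicts stand in for the file attributes, and the config values are made up for illustration.

```python
import json
import warnings


def read_training_config(attrs):
    """Return the parsed training config, or None (with a warning) if absent."""
    raw = attrs.get("training_config")
    if raw is None:
        warnings.warn("No training configuration found in save file: "
                      "the model was *not* compiled. Compile it manually.")
        return None
    return json.loads(raw.decode("utf-8"))


# Dict stand-ins for file attributes; the config values are invented.
trained_attrs = {
    "training_config": json.dumps(
        {"optimizer_config": {"class_name": "SGD"}, "loss": "mse", "metrics": ["mae"]}
    ).encode("utf-8")
}
untrained_attrs = {}

# Capture warnings so the missing-config case can be observed.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    trained_cfg = read_training_config(trained_attrs)
    untrained_cfg = read_training_config(untrained_attrs)
```

A file such as res50_model.h5, which was saved without training, takes the second path: the model is returned without being compiled, and the caller has to call compile before training it further.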
